Generative AI has become an essential part of modern life, shaping how we learn, work, and interact with technology. In this article, I'll explain RAG (Retrieval-Augmented Generation) in the simplest terms possible, so that anyone, whether a school student or a non-technical professional, can easily understand it.

Generative AI is all around us — from chatbots that answer our questions to tools that can write stories, code, or even songs. But here's a secret: sometimes AI just guesses. And that can lead to wrong or outdated answers.

This is where RAG comes in. RAG stands for Retrieval-Augmented Generation. Don't worry about the big name — let's break it down.

Imagine This: The Exam Student

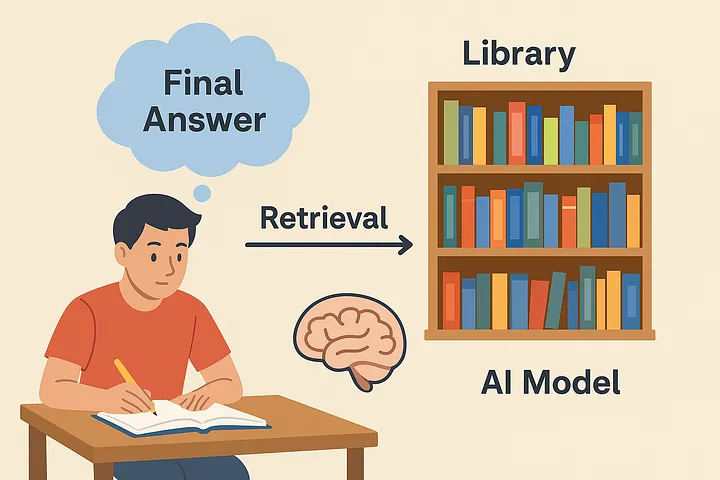

Think of a student preparing for an exam.

- They have already studied a lot (like an AI model that has been trained).

- But during the exam, if they forget a date or formula, they might just guess.

- Now, imagine if this student were allowed to open a textbook or search online during the exam. They'd definitely give more accurate answers, right?

That's exactly how RAG works.

How RAG Works (in plain English)

- Retrieval → The AI first looks up reliable information from books, documents, or databases (just like checking notes before answering).

- Generation → Then it uses that information to generate a well-formed, natural answer.

So instead of depending only on memory, the AI becomes like a student who has both a brain and a library at the same time.
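For readers who are curious what these two steps might look like in code, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `DOCUMENTS` list is a made-up mini library, `retrieve` picks a document by simply counting shared words (real systems usually use smarter search), and `generate` is only a stand-in for a real AI model, filling in a template so the example runs anywhere.

```python
import re

# Hypothetical mini "library"; in a real system these would be your own documents.
DOCUMENTS = [
    "The school library opens at 9 am and closes at 5 pm on weekdays.",
    "The final exam for class 10 mathematics is scheduled for 12 March.",
    "The cafeteria serves lunch between noon and 2 pm.",
]


def words(text):
    """Lowercase a text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents):
    """Retrieval step: return the document that shares the most words with the question."""
    return max(documents, key=lambda doc: len(words(question) & words(doc)))


def generate(question, context):
    """Generation step: a stand-in for a call to a language model.

    A real system would send both the question and the context to a model.
    Here we only fill in a template so the example has no dependencies.
    """
    return f"According to my notes: {context}"


def answer(question):
    """Look things up first, then answer using what was found."""
    context = retrieve(question, DOCUMENTS)
    return generate(question, context)


print(answer("When is the mathematics exam?"))
```

The key idea is the order inside `answer`: look things up first, then write the reply using what was found.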

Everyday Example

- Without RAG: You ask your friend the cricket score. He tries to recall it from memory but may get it wrong.

- With RAG: Your friend quickly checks a sports app and then tells you the exact score.

Simple, right?

But Wait… Can Hallucinations Still Happen with RAG?

Yes. RAG reduces hallucinations, but it doesn't completely remove them.

But what are hallucinations? In generative AI, a hallucination is an answer that sounds correct but is actually made up or false.

Why can this still happen?

- If the system retrieves wrong or irrelevant documents, the AI might still produce a polished but incorrect answer.

- If the source itself contains poor-quality or outdated data, the answer will repeat those mistakes.

👉 Think of it like a student: even with a textbook, if they open the wrong chapter, their answer will still be wrong!

That's why in real-world systems, it's important to carefully choose trusted data sources for RAG.
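One simple safeguard against the "wrong chapter" problem is to refuse to answer when nothing relevant is found. The sketch below is only an illustration under my own assumptions: the `NOTES` list, the `STOP_WORDS` set, and the `min_shared_words` cutoff are all made up for this example, and real systems typically use more sophisticated relevance scores.

```python
import re

# Hypothetical trusted notes; a real system would use its own vetted documents.
NOTES = [
    "The final exam for class 10 mathematics is scheduled for 12 March.",
    "The cafeteria serves lunch between noon and 2 pm.",
]

# Very common words say little about the topic, so they are ignored when scoring.
STOP_WORDS = {"the", "is", "a", "an", "at", "for", "and", "when", "what", "who"}


def key_words(text):
    """Return the set of lowercase words in a text, minus the common stop words."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOP_WORDS


def retrieve_if_relevant(question, documents, min_shared_words=2):
    """Return the best-matching document, or None if even the best match is too weak."""
    best = max(documents, key=lambda doc: len(key_words(question) & key_words(doc)))
    shared = len(key_words(question) & key_words(best))
    return best if shared >= min_shared_words else None


for question in ["When is the mathematics exam?", "Who won the cricket match?"]:
    found = retrieve_if_relevant(question, NOTES)
    # Saying "I don't know" is better than a polished but made-up answer.
    print(question, "->", found if found else "Sorry, I could not find that in my notes.")
```

In this sketch the cricket question gets an honest "could not find that" reply, because none of the notes are about cricket.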

Why RAG Matters

- Better accuracy: Far fewer wild guesses, because answers are based on information the AI has just looked up.

- Up-to-date info: AI can answer using the latest data in its sources, not just what it learned during training.

- Trustworthy answers: Sometimes, AI can even point you to the source it used, like a reference book or document, so you can check it yourself.

One-Line Takeaway

👉 RAG = Memory + Library. AI doesn't just rely on what it remembers; it also looks things up before answering.

That's the power of RAG. It's like giving AI an open-book exam advantage.